Training and Engineering Culture

We deliberately invest in team training because it directly affects project outcomes: the speed of feature delivery, deadline predictability, code quality, and maintenance costs. We read and discuss relevant books and articles, turn useful ideas into concrete solutions, and document them so that any team member, including people on the client side, can understand the reasoning behind each decision and its consequences.

Why This Matters to the Business

Simply put: a systematic engineering culture reduces risks and makes product development cheaper over the long term. Here is how that manifests in practice:

Fewer incidents and missed deadlines

We structure delivery so that changes reach production in small batches and pass through automated checks. This reduces the likelihood of regressions and simplifies rollback if something goes wrong.

Faster from idea to value

Short delivery cycles and transparent release rules let us test hypotheses sooner, see the results in user metrics, and adjust plans quickly.

Lower TCO (total cost of ownership)

Readable architecture, clean contracts between system components, and adherence to agreed patterns reduce the "hidden interest" paid on technical debt: the time spent investigating problems and applying manual fixes.

Transparency and manageability

Project artefacts (ADRs, the Tech Radar, guidelines, metrics) make it clear why decisions were made, which risks were considered, and how they affect timelines and budget.

How We Learn (Formats and Their Purpose)

For us, learning is not an ad hoc process driven by "whoever happened to find something", but a managed system with clear formats. Each format serves its own goal and produces an output artefact that benefits the project.

Book Clubs

(1–2 times per month)

We select a book, divide it into chapters, and hold a meeting for each chapter. Afterwards, we produce a summary and a draft ADR: which ideas suit our projects, where experiments will be needed, and what risks we see. This helps us not merely "read" a book but connect theory to specific client tasks.

Benefit for the project:

a shared language around the topic (architecture, fault tolerance, data handling) is established, and a knowledge base of decisions accumulates that can be revisited months later.

Webdelo Tech Radar

(quarterly)

We maintain an internal "technology radar" with four statuses: Adopt (use by default), Trial (try on new tasks), Assess (studying, not yet adopting), Hold (not recommended). Each entry includes a justification for its status.

Benefit for the project:

it is clear why we recommend a particular library, database, or DevOps approach; there is no need to argue "by taste", because we look at arguments and context.
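To make the structure concrete, here is a minimal sketch of radar entries as data, assuming Python 3.9+. The four `Ring` values mirror the statuses described above; the `RadarEntry` shape, the rule that rejects an empty justification, and the placeholder entry names are illustrative assumptions, not an excerpt from the actual Webdelo radar.

```python
from dataclasses import dataclass
from enum import Enum


class Ring(Enum):
    """The four statuses used on the radar."""
    ADOPT = "Adopt"    # use by default
    TRIAL = "Trial"    # try on new tasks
    ASSESS = "Assess"  # studying, not yet adopting
    HOLD = "Hold"      # not recommended


@dataclass(frozen=True)
class RadarEntry:
    # Hypothetical entry shape: technology name, status, and the reason for it.
    name: str
    ring: Ring
    justification: str

    def __post_init__(self) -> None:
        # An entry without a justification is rejected outright.
        if not self.justification.strip():
            raise ValueError(f"{self.name}: a justification is required")


def by_ring(entries: list[RadarEntry]) -> dict[Ring, list[str]]:
    """Group entry names by status, in the order Adopt, Trial, Assess, Hold."""
    grouped: dict[Ring, list[str]] = {ring: [] for ring in Ring}
    for entry in entries:
        grouped[entry.ring].append(entry.name)
    return grouped


# Placeholder entries; the names are not real recommendations.
radar = [
    RadarEntry("example-db", Ring.ADOPT, "Covers current load; team has experience"),
    RadarEntry("example-queue", Ring.ASSESS, "Under evaluation in a spike"),
    RadarEntry("legacy-orm", Ring.HOLD, "Hard to upgrade; superseded on new projects"),
]
for ring, names in by_ring(radar).items():
    print(ring.value, names)
```

Making the justification mandatory in code reflects the rule stated above: a status without an argument is not accepted onto the radar.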

Mentoring and Pair Programming

Experienced engineers run targeted sessions: designing a complex module together, demonstrating testing techniques, and analysing architectural trade-offs. This accelerates specialist growth and reduces bottlenecks in the team.

Benefit for the project:

fewer dependencies on a "single expert", higher average code quality, and faster code review.

Internal Seminars (Brown Bag / Tech Lunch)

Short 20–30-minute talks, demos, pull-request reviews, and post-incident analyses. One rule is essential: "no blame". We look for causes and improvements, not for someone to blame.

Benefit for the project:

successful practices spread across teams faster, anti-patterns are documented, and the recurrence of the same mistakes is reduced.

Dedicated Learning Time in the Sprint

We plan hours for reading, experiments, and short research tasks (spikes) in advance. These hours are protected, because we treat them as an investment in delivery quality.

Benefit for the project:

new practices are introduced not "on the side" but through managed steps and with immediately measurable goals.

Principles of Critical Reading

(How We Select Ideas)

There is a lot of hype in IT. Our goal is to separate timeless principles from passing trends. We evaluate every idea for its applicability to your domain and budget.

Primary sources first

If a pattern is described in a book or talk by the author of the approach, we rely on that source rather than on paraphrases. This keeps distortions to a minimum.

Prototypes and constraints

Before changing architecture, we build a short prototype, measure, discuss risks, and plan a rollback. If the benefit is not clear, we defer.

Context over universal recipes

For example, microservices are beneficial when there are independent domains and a team ready for operational overhead. For a small product, a well-bounded monolith is faster and cheaper.

Documenting the decision

The output is an ADR with motivation, alternatives, risks, and success criteria. Six months later, any team member will understand why we made this particular choice.
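A sketch of such a record as structured data, assuming Python 3.9+. The sections follow the paragraph above; the `ADR` class, its `status` and `decision` fields, and the Markdown rendering are hypothetical, and the sample content simply restates the monolith example from earlier in this section. In practice ADRs are usually plain Markdown files, and any template with these sections serves the same purpose.

```python
from dataclasses import dataclass, field


@dataclass
class ADR:
    # Hypothetical model; the sections mirror those listed above.
    number: int
    title: str
    status: str  # e.g. "Proposed", "Accepted", "Superseded"
    motivation: str
    decision: str
    alternatives: list[str] = field(default_factory=list)
    risks: list[str] = field(default_factory=list)
    success_criteria: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the record as a short Markdown document."""
        def bullets(items: list[str]) -> str:
            # Make empty sections explicit instead of silently omitting them.
            return "\n".join(f"- {item}" for item in items) or "- (none recorded)"

        return "\n\n".join([
            f"# ADR-{self.number:03d}: {self.title}",
            f"Status: {self.status}",
            f"## Motivation\n{self.motivation}",
            f"## Decision\n{self.decision}",
            f"## Alternatives considered\n{bullets(self.alternatives)}",
            f"## Risks\n{bullets(self.risks)}",
            f"## Success criteria\n{bullets(self.success_criteria)}",
        ])


adr = ADR(
    number=7,
    title="Keep a modular monolith for now",
    status="Accepted",
    motivation="Small team; domains are not yet independent.",
    decision="Split the code into modules with explicit interfaces.",
    alternatives=["Microservices from the start"],
    risks=["Module boundaries may erode without review"],
    success_criteria=["Lead time for changes does not grow over the next quarter"],
)
print(adr.to_markdown())
```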

Artefacts You Receive

We leave behind not only code but also clear decision documentation.

Architecture Overview + Context Map

A concise diagram of domains and the responsibility boundaries between system components. It makes it easy to discuss changes with the product team and other stakeholders.

ADR Package (Architecture Decision Records)

A set of cards for key decisions: which option was chosen, why, and what happens if conditions change.

Quality Gates

A set of automated checks: tests, static analysis, security, formatting. This is part of the pipeline, not a "wish list".
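A rough sketch of what "part of the pipeline" means in practice: a small runner that executes each check, reports every result, and returns a failing status if any check fails. `pytest`, `ruff`, `pip-audit`, and `black` are real tools, but this particular selection is an assumption for a hypothetical Python project; in most setups the same logic is expressed directly in the CI system's own configuration.

```python
import subprocess

# Placeholder gates for a hypothetical Python project; swap in the project's own tools.
DEFAULT_GATES: list[tuple[str, list[str]]] = [
    ("tests", ["pytest", "-q"]),
    ("static analysis", ["ruff", "check", "."]),
    ("security", ["pip-audit"]),
    ("formatting", ["black", "--check", "."]),
]


def run_gates(gates: list[tuple[str, list[str]]]) -> bool:
    """Run every gate, print its result, and return True only if all passed."""
    all_passed = True
    for name, command in gates:
        try:
            passed = subprocess.run(command, capture_output=True).returncode == 0
        except FileNotFoundError:
            # A missing tool counts as a failed gate, never as a skipped one.
            passed = False
        print(f"[{'PASS' if passed else 'FAIL'}] {name}")
        all_passed = all_passed and passed
    return all_passed


def main() -> int:
    """CI entry point: exit code 0 only if every gate passed."""
    return 0 if run_gates(DEFAULT_GATES) else 1

# In the CI step: raise SystemExit(main())
```

Running all gates instead of stopping at the first failure is a deliberate choice in this sketch: the author of a change sees every problem in a single pass.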

Tech Radar Snapshot

A brief overview of project technologies: what we use now and what alternatives were considered.

Runbooks / Playbooks

Clear instructions: how to release, how to roll back, what to do in typical incidents.

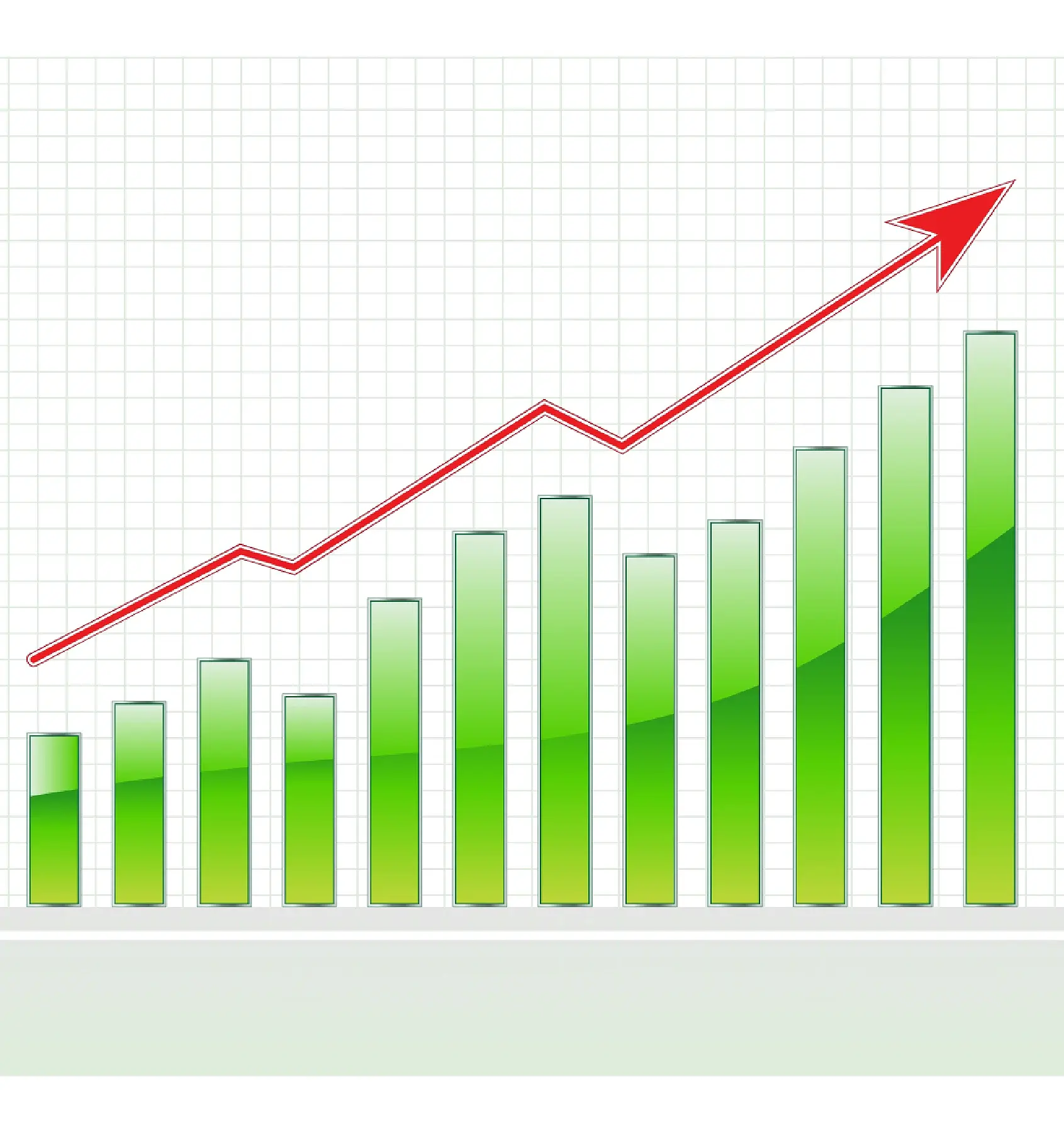

How We Measure Effect (Metrics)

We look not only at process but also at outcomes. A set of metrics shows us whether we are moving in the right direction.

Lead Time for Changes

Time from a change being committed to it running in production. A short lead time means a fast feedback loop and less risk of finished work going stale while it waits to be released.

Deployment Frequency

How often we deploy changes to production. Regular small releases are easier to control and roll back.

Change Failure Rate

The share of releases that lead to incidents and require intervention. We aim to reduce this through automated checks and gradual releases.

MTTR (Mean Time To Restore)

Average recovery time after a failure: the lower it is, the more resilient the process and architecture.

Additionally, we track pull request size, automated test coverage, the number of vulnerabilities, and technical debt by module.

How to use this on your project: if no historical data exists, we start collecting it from scratch, and after 8–12 weeks we can already show trends. This helps prioritise what will deliver the greatest impact in the next quarter.
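Lead time, deployment frequency, change failure rate, and MTTR are the same four delivery metrics studied in Accelerate, and they can be computed from a simple deployment log. A minimal sketch, assuming each record holds a commit time, a deploy time, a failure flag, and a restore time; the `Deployment` record and `delivery_metrics` function are hypothetical, and MTTR is simplified by treating the deployment moment as the start of the outage.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record joined from CI/CD history and the incident tracker.
    committed_at: datetime
    deployed_at: datetime
    caused_failure: bool = False
    restored_at: Optional[datetime] = None  # only set for failed deployments


def delivery_metrics(deploys: list[Deployment], period_days: int) -> dict[str, float]:
    """Compute the four metrics for all deployments made within period_days."""
    if not deploys or period_days <= 0:
        raise ValueError("need at least one deployment and a positive period")
    lead_times = [d.deployed_at - d.committed_at for d in deploys]
    failures = [d for d in deploys if d.caused_failure]
    # Simplification: the outage is assumed to start at deployment time.
    restores = [d.restored_at - d.deployed_at for d in failures if d.restored_at is not None]
    mttr = sum(restores, timedelta()) / len(restores) if restores else timedelta()
    return {
        "lead_time_median_h": median(lead_times).total_seconds() / 3600,
        "deploys_per_week": len(deploys) / (period_days / 7),
        "change_failure_rate": len(failures) / len(deploys),
        "mttr_h": mttr.total_seconds() / 3600,
    }


t0 = datetime(2024, 1, 1, 9, 0)
history = [
    Deployment(t0, t0 + timedelta(hours=2)),
    Deployment(t0, t0 + timedelta(hours=4)),
    Deployment(t0, t0 + timedelta(hours=6), caused_failure=True,
               restored_at=t0 + timedelta(hours=7)),
    Deployment(t0, t0 + timedelta(hours=8)),
]
print(delivery_metrics(history, period_days=14))
# {'lead_time_median_h': 5.0, 'deploys_per_week': 2.0, 'change_failure_rate': 0.25, 'mttr_h': 1.0}
```

The median is used for lead time because a single long-running change would otherwise distort an average.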

How Ideas Become Code (the path from discussion to implementation)

Discussion and ADR Draft

Short Experiment (Spike/PoC)

Limited Rollout

Scaling Decision
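The Limited Rollout step is often implemented with a percentage-based feature flag. A minimal sketch, assuming users have stable string IDs; `bucket`, `in_rollout`, and the flag name are hypothetical. Hashing the flag name together with the user ID gives each user a stable bucket, so raising the percentage only adds users (anyone enabled at 10% stays enabled at 25%), and setting it to 0 acts as a rollback without a redeploy.

```python
import hashlib


def bucket(flag: str, user_id: str) -> int:
    """Map a (flag, user) pair to a stable bucket in the range 0..99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    # Take the first 8 bytes as an unsigned integer, then reduce to 100 buckets.
    return int.from_bytes(digest[:8], "big") % 100


def in_rollout(flag: str, user_id: str, percent: int) -> bool:
    """True if this user falls inside the first `percent` buckets of the flag."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return bucket(flag, user_id) < percent


# Usage: enable a hypothetical "new-checkout" flow for 10% of users.
users = [f"user-{i}" for i in range(1000)]
enabled = sum(in_rollout("new-checkout", u, 10) for u in users)
print(enabled)  # roughly 100; the exact count depends on the hash values
```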

Examples: Book → Practice

Each such example is recorded in an ADR and presented in an internal talk, so that the practice is not lost when people leave.

Release It!

(Michael Nygard)

The book teaches designing systems that withstand failures. We apply the circuit breaker pattern (limiting cascading errors) and bulkhead (partitioning resources into compartments) to localise problems and avoid bringing down the entire system.
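A compact sketch of both patterns, written for illustration rather than taken from the book; class names, thresholds, and the injectable clock are assumptions. The breaker fails fast after a run of consecutive errors and lets a trial call through once a cooldown has passed; the bulkhead caps concurrent calls to one dependency with a semaphore, so a slow service cannot exhaust resources shared with the rest of the system. Production systems usually rely on a mature resilience library instead.

```python
import threading
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without contacting the dependency."""


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors; allows a trial call after `reset_after` s.

    Single-threaded sketch: concurrent callers would need a lock around the state.
    """

    def __init__(self, max_failures: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock  # injectable so tests can control time
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None and self.clock() - self.opened_at < self.reset_after:
            # Open: fail fast instead of piling more load on a struggling dependency.
            raise CircuitOpenError("dependency unavailable; failing fast")
        # Closed, or half-open once the cooldown has elapsed: let the call through.
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()  # trip, or re-trip after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # a success closes the circuit again
        return result


class BulkheadFullError(Exception):
    """Raised when a compartment has no free slots."""


class Bulkhead:
    """Caps concurrent calls to one dependency so it cannot starve the others."""

    def __init__(self, max_concurrent: int) -> None:
        self._slots = threading.BoundedSemaphore(max_concurrent)

    def call(self, fn: Callable[[], T]) -> T:
        # Reject at once rather than queueing, so callers are never blocked.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError("compartment full; rejecting call")
        try:
            return fn()
        finally:
            self._slots.release()
```

The two compose: giving each dependency its own bulkhead and breaker keeps a slow service from tying up every worker, and keeps a failing one from being hammered with further calls while it recovers.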

Domain‑Driven Design

(Eric Evans, Vaughn Vernon)

The approach helps to separate independent parts of the domain. We define bounded contexts and clear contracts between them. This reduces coupling and simplifies independent releases.
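A small sketch of what a clear contract between two bounded contexts can look like, using hypothetical Sales and Shipping contexts and assuming Python 3.9+. Sales publishes an immutable `OrderPlaced` event, and Shipping builds its own model from that event instead of importing Sales' internal `Order` class. All names are illustrative; the translation function plays, in deliberately simplified form, the role that DDD literature calls an anti-corruption layer.

```python
from dataclasses import dataclass
from decimal import Decimal


# --- Sales context: internal model, free to change -------------------------
@dataclass
class Order:
    id: str
    customer_email: str
    lines: list[tuple[str, int, Decimal]]  # (sku, quantity, unit price)


# --- Published contract: the only shape other contexts may depend on -------
@dataclass(frozen=True)
class OrderPlaced:
    order_id: str
    items: tuple[tuple[str, int], ...]  # (sku, quantity); no prices, no personal data


def publish(order: Order) -> OrderPlaced:
    """Sales maps its internal Order onto the public event."""
    return OrderPlaced(order.id, tuple((sku, qty) for sku, qty, _price in order.lines))


# --- Shipping context: its own model, built only from the contract ---------
@dataclass
class Shipment:
    reference: str
    picks: dict[str, int]  # sku -> total quantity to pick


def to_shipment(event: OrderPlaced) -> Shipment:
    """Translation step: shipping never imports or inspects sales' Order class."""
    picks: dict[str, int] = {}
    for sku, qty in event.items:
        picks[sku] = picks.get(sku, 0) + qty
    return Shipment(reference=f"SHP-{event.order_id}", picks=picks)


order = Order("42", "buyer@example.com", [
    ("BOOK", 1, Decimal("20.00")),
    ("PEN", 3, Decimal("1.50")),
    ("BOOK", 1, Decimal("20.00")),
])
print(to_shipment(publish(order)))
```

Because Shipping depends only on `OrderPlaced`, Sales can rename fields or restructure `Order` without a coordinated release, as long as the published event keeps its shape.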

Accelerate

(Forsgren, Humble, Kim)

The research links development practices to business outcomes. We use trunk-based development, small PRs, and automated checks to increase release frequency without raising incident rates.
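One way to keep PRs small is an automated size check in the pipeline. A minimal sketch, assuming a Git repository and relying on the `git diff --numstat` output format (added lines, deleted lines, and path, separated by tabs, with `-` for binary files); the `origin/main` base branch and the 400-line limit are hypothetical defaults, not figures from the book.

```python
import subprocess


def changed_lines(numstat: str) -> int:
    """Sum added + deleted lines from `git diff --numstat` output."""
    total = 0
    for line in numstat.splitlines():
        if not line.strip():
            continue
        added, deleted, _path = line.split("\t", 2)
        # Binary files are reported as "-\t-\tpath"; they are not counted here.
        if added != "-" and deleted != "-":
            total += int(added) + int(deleted)
    return total


def pr_is_small(base: str = "origin/main", limit: int = 400) -> bool:
    """Compare the current branch with `base`; both defaults are assumptions."""
    out = subprocess.run(
        ["git", "diff", "--numstat", f"{base}...HEAD"],
        capture_output=True, text=True, check=True,
    ).stdout
    return changed_lines(out) <= limit
```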

Team Topologies

(Skelton, Pais)

The book describes how to organise teams around the value stream. We combine stream‑aligned and platform teams to accelerate onboarding and reduce "hidden coordination".

The Role of Technical Leads and Mentoring

Tech leads are the "carriers" of engineering culture on the project. They translate business goals into architectural decisions and are responsible for delivery quality.

Architectural responsibility

The lead formulates architecture goals, agrees on trade-offs, maintains the ADRs, and ensures decisions do not diverge from the chosen strategy.

Mentoring and review

Individual growth plans, regular code reviews, pair programming. This reduces the risk of bottlenecks and accelerates the team's work.

Communication

The lead explains decisions in the language of risks, timelines, and cost. This way the business understands what it is paying for and what to expect on a quarterly horizon.

Risks and How We Manage Them

Risk of dogmatism

Every pattern is validated through experiments and metrics. If the benefit is not confirmed, we defer it or roll it back.

Risk of "learning instead of delivering"

Learning time is planned in advance and tied to project goals. Every initiative comes with measurable outcomes.

Accuracy of comparisons

We do not compare ourselves to specific companies. We compare approaches and choose those that provide value in your scenario.

What's Next

If you want to understand exactly how these practices will reduce risks and costs on your product, we can start with a short audit: in 2–3 meetings we will establish baseline metrics, outline the first improvements, and agree on a 4–6 week plan with an expected effect on timelines, quality, and cost.

